tl;dr

GPT-3’s edit capability works like an input-function-output process.

Not only can you verbally instruct GPT-3 to transform inputs, but you can also tell it to evaluate Python functions!

Introduction

Recently, OpenAI released an update to the GPT-3 playground for editing and inserting text. This update adds a new layer of control over what GPT-3 does and modifies. For example, we can provide an input text and an instruction, and GPT-3 transforms the input text into the expected output. Pretty magical!

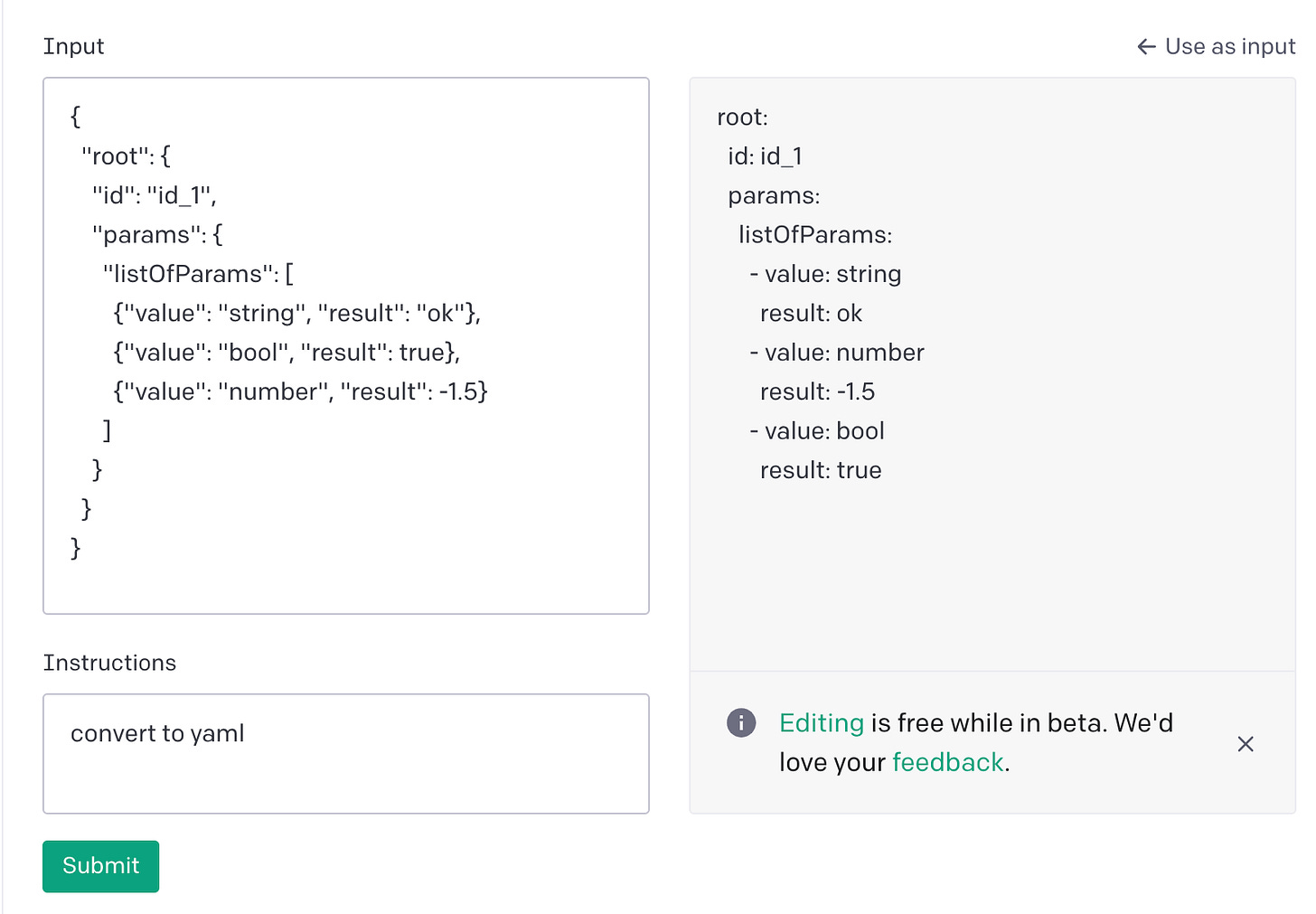

In OpenAI’s example, GPT-3 converts JSON to YAML.

Input-Function-Output

The way the UI is structured piqued my interest. There are three main sections: input, instruction, and output. As a programmer, I found this oddly familiar: it resembles a function that takes some input and returns some output. Maybe I can get GPT-3 to act as a universal function?

Let’s throw some instructions at GPT-3 and find out!

Boolean functions

GPT-3 can evaluate true/false instructions for specific, straightforward checks like palindromes and square numbers.
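For comparison, the palindrome instruction is a one-liner in conventional Python. This is a sketch for checking GPT-3’s answers, not part of the prompt; `is_palindrome` is a name I picked, and it treats each input as a string of decimal digits:

```python
def is_palindrome(n: int) -> bool:
    # A number is a palindrome if its decimal digits read the same reversed
    digits = str(n)
    return digits == digits[::-1]

# Same inputs as the example below, printed in the prompt's format
for value in [123, 121, 111, 123454321, 123123]:
    print(f"Input: {value}, Output: {str(is_palindrome(value)).lower()}")
```

It agrees with all five of GPT-3’s answers in the example below.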

# Input

Input: 123, Output:

Input: 121, Output:

Input: 111, Output:

Input: 123454321, Output:

Input: 123123, Output:

# Instruction

Output true if input is a palindrome

# Output

Input: 123, Output: false

Input: 121, Output: true

Input: 111, Output: true

Input: 123454321, Output: true

Input: 123123, Output: false

Simple statistics functions

GPT-3 can perform simple statistics to a certain degree. For example, it seems to understand how to find a sum, mean, median, and mode.
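To have ground truth for these examples, Python’s standard `statistics` module computes the same quantities directly. A short sketch using the inputs from the two mean examples below:

```python
import statistics

# Inputs from the two mean examples in this section
first = [1, 4, 5, 6, 2, 1, 1]
second = [50, 55, 12, 10, 55, 22, 11, 53, 55]

print("sum:", sum(first))                   # 20
print("mean:", statistics.mean(first))      # 20/7, about 2.857
print("median:", statistics.median(first))  # 2 (middle of the sorted values)
print("mode:", statistics.mode(first))      # 1 (most common value)
print("mean:", statistics.mean(second))     # 323/9, about 35.889
```

The exact means are 20/7 (about 2.857) for the first list and 323/9 (about 35.889) for the second.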

# Input

Input: 1, 4, 5, 6, 2, 1, 1

Output:

# Instruction

Output is the mean of the input

# Output

Input: 1, 4, 5, 6, 2, 1, 1

Output: 2.28571428571 (correct answer is 2.857142...)

Larger inputs also produce answers that deviate from the correct value. (More on this point later)

# Input

Input: 50, 55, 12, 10, 55, 22, 11, 53, 55

Output:

# Instruction

Output is the mean of the input

# Output

Input: 50, 55, 12, 10, 55, 22, 11, 53, 55

Output: 35.55555555555556 (correct answer is 35.8888...)

List transformation functions

GPT-3 can apply a command to update a list of numbers. Addition, subtraction, multiplication, and division return the correct answer as long as the numbers are small.
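The instruction in the example below corresponds to a one-line list comprehension, which gives the reference output to compare against:

```python
values = [58, 18, 96, 28, 83, 85, 91, 84]

# Apply the instruction "each value plus 10" element-wise
plus_ten = [v + 10 for v in values]
print(", ".join(str(v) for v in plus_ten))
# 68, 28, 106, 38, 93, 95, 101, 94
```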

# Input

Input: 58, 18, 96, 28, 83, 85, 91, 84

Output:

# Instruction

Output is each value plus 10

# Output

Input: 58, 18, 96, 28, 83, 85, 91, 84

Output: 68, 28, 106, 38, 93, 95, 101, 94

Data lookup functions

GPT-3 can look up a value from a lookup table. (I seeded the first answer; this seems to help GPT-3 get the correct answer. More on this later)
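To check these answers, here is an iterative Fibonacci sketch. It assumes the indexing implied by the seeded answer, F(4) = 3, which corresponds to F(0) = 0 and F(1) = 1:

```python
def fib(n: int) -> int:
    # Iterative Fibonacci with F(0) = 0 and F(1) = 1, so F(4) = 3
    a, b = 0, 1
    for _ in range(n):
        a, b = b, a + b
    return a

# Same inputs as the example below
for n in [4, 3, 23, 30, 8]:
    print(f"Input: {n}, Output: {fib(n)}")
```

All five values in GPT-3’s output below match this function.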

# Input

Input: 4, Output: 3

Input: 3, Output:

Input: 23, Output:

Input: 30, Output:

Input: 8, Output:

# Instruction

Output is the nth fibonacci number

# Output

Input: 4, Output: 3

Input: 3, Output: 2

Input: 23, Output: 28657

Input: 30, Output: 832040

Input: 8, Output: 21

Algebraic functions

This class of functions is where things start to get more interesting. I purposely kept the instructions in written English above, but GPT-3 has no problem understanding simple algebraic formulas.

# Input

Input: 50, Output:

Input: 12, Output:

Input: 6457, Output:

Input: 43, Output:

Input: 1.5, Output:

Input: -1, Output:

# Instruction

f(x) = x+3

Output is f(input)

# Output

Input: 50, Output: 53

Input: 12, Output: 15

Input: 6457, Output: 6460

Input: 43, Output: 46

Input: 1.5, Output: 4.5

Input: -1, Output: 2

With a slightly harder function, f(x) = 2x+3, GPT-3 struggles with large numbers, decimal numbers, and negative numbers. Given these inputs, it returns answers that are close but often incorrect.
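The reference values in the annotations below come from evaluating f(x) = 2x + 3 directly; a minimal sketch over the same inputs:

```python
def f(x):
    # The algebraic instruction f(x) = 2x + 3
    return 2 * x + 3

for x in [50, 12, 6457, 43, 1.5, -1]:
    print(f"Input: {x}, Output: {f(x)}")
```

For example, f(6457) is 12917, f(1.5) is 6.0, and f(-1) is 1, so GPT-3’s answers for those three inputs are off by 4, 0.5, and 4 respectively.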

# Instruction

f(x) = 2x+3

Output is f(input)

# Output

Input: 50, Output: 103

Input: 12, Output: 27

Input: 6457, Output: 12913 (correct answer is 12,917)

Input: 43, Output: 89

Input: 1.5, Output: 5.5 (correct answer is 6)

Input: -1, Output: -3 (correct answer is 1)

Python functions

Stepping the abstraction up a notch, GPT-3 can also evaluate Python functions. Below I tested its ability to handle conditionals, loops, recursion, multiple parameters, multiple returns, and multiple functions.
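Because these instructions are ordinary Python, the expected outputs can be generated by simply running them. Below is a small, hypothetical helper, `render`, that prints results in the same `Input: x, Output: y` layout as the prompts, shown with the conditional function from the next section:

```python
from typing import Any, Callable, Iterable


def render(f: Callable[[Any], Any], inputs: Iterable[Any]) -> str:
    # Hypothetical helper: evaluate f on each input and format the results
    # like the "# Output" blocks in this post
    return "\n".join(f"Input: {x}, Output: {f(x)}" for x in inputs)


def f(x):
    if x > 30:
        return "too large"
    else:
        return x + 3


print(render(f, [50, 12, 6457, -1, 31, 30]))
```

The printed lines match GPT-3’s output for the conditional example line for line, which makes this a cheap way to grade any of the Python prompts that follow.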

Conditionals

GPT-3 had no problems with conditionals. It was even able to understand pseudocode inside a Pythonic block. For example, it understood a conditional like if isOdd(x): without seeing the method’s implementation.

# Input

Input: 50, Output:

Input: 12, Output:

Input: 6457, Output:

Input: -1, Output:

Input: 31, Output:

Input: 30, Output:

# Instruction

def f(x):
    if x > 30:
        return "too large"
    else:
        return x + 3

Output is f(input)

# Output

Input: 50, Output: too large

Input: 12, Output: 15

Input: 6457, Output: too large

Input: -1, Output: 2

Input: 31, Output: too large

Input: 30, Output: 33

Loops

For simple loops, GPT-3 works correctly. It understands both a fixed-iteration loop and a parameterized loop.

# Input

Input: 50, Output:

Input: 12, Output:

Input: -1, Output:

Input: 31, Output:

Input: 30, Output:

# Instruction

def f(x):
    y = 0
    for i in range(3):
        y += x
    return y

Output is f(input)

# Output

Input: 50, Output: 150

Input: 12, Output: 36

Input: -1, Output: -3

Input: 31, Output: 93

Input: 30, Output: 90

# Instruction

def f(x):
    y = 0
    for i in range(x):
        y += i
    return y

Output is f(input)

# Output

Input: 50, Output: 1225

Input: 12, Output: 66

Input: -1, Output: 0

Input: 31, Output: 465

Input: 30, Output: 435

However, GPT-3 struggles when a variable is updated and then referenced again. In the example below, GPT-3 could not keep track of the accumulated sum. Instead, it seems to have added the original x on each of the three iterations, returning 4x instead of 8x.
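A trace of the function in the example below makes the accumulation visible: x doubles on every pass, so three iterations multiply it by 2 × 2 × 2 = 8. The `print` call is added only for illustration and is not part of the prompt:

```python
def f(x):
    for i in range(3):
        x += x  # x doubles on each of the three iterations
        print(f"after iteration {i}: x = {x}")
    return x


result = f(50)
# after iteration 0: x = 100
# after iteration 1: x = 200
# after iteration 2: x = 400
```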

# Instruction

def f(x):
    for i in range(3):
        x += x
    return x

Output is f(input)

# Output

Input: 50, Output: 200 (correct answer is 400)

Input: 12, Output: 48 (correct answer is 96)

Input: -1, Output: -4 (correct answer is -8)

Input: 31, Output: 124 (correct answer is 248)

Input: 30, Output: 120 (correct answer is 240)

Recursion

GPT-3 grasps simple recursion. It can compute a function with a single recursive call. When a function makes multiple recursive calls whose results must be combined, like f(x-1) + f(x-2), GPT-3 struggles, although its answers are usually in the right ballpark.
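Unrolling the single-call recursion in the next example shows that it is a simple arithmetic sequence: each call adds 2 until x reaches 1, so for x ≥ 1 the function reduces to f(x) = 2(x − 1) + 1 = 2x − 1. A quick check in Python:

```python
def f(x):
    # Same function as the prompt: one recursive call per step
    if x <= 1:
        return x
    y = x - 1
    return 2 + f(y)


# For x >= 1 the recursion unrolls to 2 * (x - 1) + f(1) = 2x - 1
for x in range(1, 20):
    assert f(x) == 2 * x - 1

print([f(x) for x in [4, 5, 6, 7, 8]])  # [7, 9, 11, 13, 15]
```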

# Input

Input: 4, Output: 7

Input: 5, Output:

Input: 6, Output:

Input: 7, Output:

Input: 8, Output:

# Instruction

def f(x):
    if x <= 1:
        return x
    y = x - 1
    return 2 + f(y)

Output is f(input)

# Output

Input: 4, Output: 7

Input: 5, Output: 9

Input: 6, Output: 11

Input: 7, Output: 13

Input: 8, Output: 15

Just for fun, I gave GPT-3 a function that computes the stopping time of the Collatz conjecture, and it was able to compute it. (Nit: this needed a few trials, and the output was often off by 1.)
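One detail worth knowing when checking these answers: because the base case returns x when x <= 1, the prompt’s f(1) is 1, so for x ≥ 2 the function returns the number of Collatz steps needed to reach 1, plus one. For example, 4 → 2 → 1 is two steps, and f(4) = 3. A sketch comparing the two, using `//` instead of the prompt’s `/` so the values stay integers; `total_stopping_time` is a hypothetical name:

```python
def f(x):
    # Same logic as the prompt, but with integer division so values stay ints
    if x <= 1:
        return x
    if x % 2 == 0:
        return 1 + f(x // 2)
    else:
        return 1 + f(3 * x + 1)


def total_stopping_time(x: int) -> int:
    # Number of Collatz steps needed to reach 1 (x must be >= 1)
    steps = 0
    while x != 1:
        x = x // 2 if x % 2 == 0 else 3 * x + 1
        steps += 1
    return steps


for x in [123, 13, 4, 3, 23, 30]:
    print(x, f(x), total_stopping_time(x))
```

All six of GPT-3’s answers in the example below match f.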

# Input

Input: 123, Output: 47

Input: 13, Output:

Input: 4, Output:

Input: 3, Output:

Input: 23, Output:

Input: 30, Output:

# Instruction

def f(x):
    if x <= 1:
        return x
    if x % 2 == 0:
        return 1 + f(x/2)
    else:
        return 1 + f(3*x+1)

Output is f(input)

# Output

Input: 123, Output: 47

Input: 13, Output: 10

Input: 4, Output: 3

Input: 3, Output: 8

Input: 23, Output: 16

Input: 30, Output: 19

Multiple Parameters, Multiple Returns & Multiple Functions

GPT-3 struggled with functions that take multiple parameters. While it does understand how many parameters there are, it often confuses one variable for another.
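Evaluating the function in the example below both ways hints at what may have happened with the (5, 4) input: GPT-3’s answer of 14 is exactly f(4, 5), the result with the arguments swapped. This is a single data point rather than proof, but it is consistent with GPT-3 confusing one variable for another:

```python
def f(x0, x1):
    return x0 + x1 * 2


print(f(5, 4))  # 13, the correct answer
print(f(4, 5))  # 14, the same arguments swapped
```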

# Input

Input: 4, 10 Output:

Input: 5, 4 Output:

Input: 6, 5 Output:

Input: 8, 3 Output:

# Instruction

def f(x0, x1):
    return x0 + x1 * 2;

Output is f(input)

# Output

Input: 4, 10 Output: 24

Input: 5, 4 Output: 14 (correct answer is 13)

Input: 6, 5 Output: 16

Input: 8, 3 Output: 14

GPT-3 does superbly when asked to return a tuple of variables from a function.
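Running the function from the example below in Python is a useful check on formatting: in Python 3 the `/` operator always returns a float, so the first element for 50 is 25.0 rather than 25. GPT-3’s values are numerically right, though it formats them inconsistently (25 versus 5.0):

```python
def f(x):
    y = x / 2
    return y, x


for x in [50, 12, 43, 10]:
    print(f(x))
# (25.0, 50)
# (6.0, 12)
# (21.5, 43)
# (5.0, 10)
```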

# Input

Input: 50, Output:

Input: 12, Output:

Input: 43, Output:

Input: 10, Output:

# Instruction

def f(x):
    y = x / 2
    return y, x

Output is f(input)

# Output

Input: 50, Output: 25, 50

Input: 12, Output: 6, 12

Input: 43, Output: 21.5, 43

Input: 10, Output: 5.0, 10

With multiple functions, GPT-3 often mixes up the order of composition. While it had no problems with g(f(x)), it would not reliably perform f(g(x)); often it ignored the prompt and applied g(f(x)) instead.
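With the functions from the example below, the two orders of composition always give different results, which makes it easy to tell which one GPT-3 applied: g(f(x)) = 3x + 2, while f(g(x)) = 3(x + 2) = 3x + 6, so they differ by exactly 4:

```python
def f(x):
    return x * 3


def g(y):
    return y + 2


for x in [13, 4, 23, 30]:
    # g(f(x)) = 3x + 2, while f(g(x)) = 3x + 6
    print(x, g(f(x)), f(g(x)))
```

For 23, g(f(23)) is 71 and f(g(23)) is 75.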

# Input

Input: 13, Output: 41

Input: 4, Output:

Input: 23, Output:

Input: 30, Output:

# Instruction

def f(x):
    return x * 3

def g(y):
    return y + 2

Output is g(f(input))

# Output

Input: 13, Output: 41

Input: 4, Output: 14

Input: 23, Output: 73 (correct answer is 71)

Input: 30, Output: 92

While the examples above use Python, GPT-3 had no trouble understanding other programming languages like JavaScript.

Conclusion

GPT-3’s edit capability is one step closer to the holy grail of computing: a god program that can turn any input into the correct output. For a small set of inputs and simple instructions, this might already be the case today!

Praise aside, let’s discuss an area where GPT-3 struggles.

GPT-3 seems to have issues with large numbers; Moyix’s gist covers this in detail. GPT-3 tends to guesstimate the result of an algebraic function instead of evaluating it exactly, so the answer is only correct up to a certain approximation. I suspect that weights learned from common algebraic patterns bias how it makes decisions. Consider how I handle a “large” multiplication like 29*21: I would not do the full long multiplication in my head. Instead, I would simplify the numbers and then compute. Rather than 29*21, I would re-evaluate the problem as 30*20 and quickly guess that the result is around 600. I round both numbers to the nearest ten because the 10-times table is easier for my brain. GPT-3 might be doing something similar.
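The 29*21 shortcut above, worked out in Python: rounding both factors to the nearest ten with `round(n, -1)` gives an estimate within about 1.5% of the exact product:

```python
a, b = 29, 21

exact = a * b                            # 609
estimate = round(a, -1) * round(b, -1)   # 30 * 20 = 600
relative_error = abs(exact - estimate) / exact

print(exact, estimate, f"{relative_error:.1%}")  # 609 600 1.5%
```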

Estimation might be GPT-3’s way to work around what seems to be a lack of good working memory. Sure, it can refer to facts and inputs, but it acts as if it has difficulty updating and modifying that memory. As a result, it struggled with operations like x += x. If GPT-3 had access to some external memory where it could read, write, and access data, it might choose to do the long multiplication instead of estimating. The human parallel is doing multiplication in your head versus with pencil and paper. The latter is much easier because you have more headspace.
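To make the pencil-and-paper analogy concrete, here is long multiplication with an explicit scratchpad: each partial product is written down before the final sum, which is the kind of intermediate state the paragraph above suggests GPT-3 lacks. This illustrates the analogy only and makes no claim about how GPT-3 works internally; `long_multiply` is a hypothetical name:

```python
from typing import List, Tuple


def long_multiply(a: int, b: int) -> Tuple[int, List[int]]:
    # Multiply non-negative ints one digit of b at a time,
    # recording each shifted partial product on a "scratchpad"
    scratchpad = []
    for place, digit in enumerate(reversed(str(b))):
        partial = a * int(digit) * 10 ** place
        scratchpad.append(partial)
    return sum(scratchpad), scratchpad


product, partials = long_multiply(29, 21)
print(partials, product)  # [29, 580] 609
```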

For certain instructions, I had to submit the request multiple times until I got the correct result. I learned that seeding the prompt with a correct example output seems to increase the probability that GPT-3 returns the correct result. Interestingly, GPT-3 sometimes ignores the example and replaces it with a different answer. The randomness of some answers was quite intriguing.

Now for the million-dollar question: is GPT-3 Turing complete? Maybe. As demonstrated, it seems able to perform regular computations. Perhaps the next iteration will be robust to larger numbers and more complex instructions. Heck, it might even have something novel to say about the halting problem. Either way, I can’t wait for the day DOOM runs on a successor to GPT-3.
